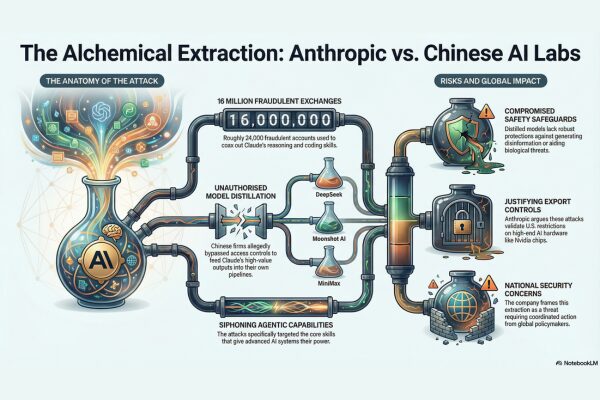

Anthropic, the U.S. AI company best known for its Claude large language model, has claimed that three Chinese AI firms (DeepSeek, Moonshot AI, and MiniMax) ran what it describes as industrial-scale “distillation attacks” against Claude, siphoning its intellectual property to train their own systems.

In an industry blog post shared on February 23, Anthropic said it had identified roughly 24,000 fraudulent accounts that together made more than 16 million exchanges with Claude. These interactions, it claims, were not ordinary user queries but carefully constructed sequences designed to coax out specific reasoning, coding, and agentic capabilities — the very skills that give Claude and similar advanced AI systems their power.

The technique at the centre of the allegation — known as distillation — is familiar in AI research. Legitimately used, it helps developers train smaller or cheaper models by teaching them from the outputs of larger ones.
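For readers who want a concrete picture of the legitimate version, here is a minimal sketch of the classic textbook formulation, in which a small "student" model is trained to match a larger "teacher" model's output probabilities. The function names, the temperature value, and the example numbers are illustrative assumptions, not anything taken from Anthropic's post. Distillation done through a chat interface would more plausibly mean training on the teacher's text responses than on its raw scores.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's.

    Hypothetical helper for illustration: the loss is 0 when the student
    reproduces the teacher exactly and grows as the two diverge.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Made-up scores over three candidate next tokens.
teacher = [4.0, 1.0, 0.5]
student = [2.0, 2.0, 1.0]
print(distillation_loss(teacher, student))
```

Training the student means adjusting its parameters to push this loss toward zero across many examples, which is why large volumes of teacher output are valuable to whoever is doing the distilling.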

But Anthropic says the way these Chinese companies deployed the method crossed a line: using unauthorized access to extract high-value outputs from Claude and then feeding those responses into their own model training pipelines. Because Claude isn’t commercially available in China, Anthropic suggests these actors had to circumvent access controls and terms of service to do so at scale.

Anthropic warns that such “illicitly distilled” models would lack the robust safety and misuse safeguards engineered into its own systems. Without protections against harmful uses — for example, generating disinformation, aiding cyberattacks, or even helping design biological threats — these copied capabilities could spread widely without oversight. The company frames this not only as competitive theft but also as a national security concern requiring coordinated action from industry and policymakers.

The accusations arrive amid ongoing debates in the U.S. over export controls on advanced AI chips, especially those made by Nvidia, intended to limit frontier tech from reaching Chinese developers. Anthropic argues that large-scale distillation attacks further justify such controls, since the computing horsepower needed to run them — even indirectly — still depends on access to high-end hardware.

Public response has been mixed. Some commentators welcomed Anthropic’s transparency about what it views as a new kind of competitive threat in AI; others pointed out that training large models inevitably involves using vast datasets and outputs, raising thorny questions about where to draw the line between legitimate research and unauthorized extraction.

The controversy even drew high-profile voices like Elon Musk, who posted on social media that Anthropic itself has faced serious copyright disputes over the datasets used to train Claude and similar models — a reminder that AI ethics debates cut across all sides of the industry.

Anthropic says it is responding by improving detection systems for distillation-style exploitation and urging broader industry cooperation to stem such campaigns — but the episode underscores how AI innovation, intellectual property, and geopolitics are increasingly entwined in ways that go far beyond code and models.